Some of the most flexible aspects of Interoute’s VDC can be considered both strong points and weak points. It’s great to be able to create subnets and VLANs with a single click, and then effortlessly connect them to either the Internet or a private WAN, but sometimes the flexibility afforded allows one to forget fundamental principles, such as KISS, and shoot oneself in the foot.

In some of the engagements I’ve worked on, I’ve seen virtual network diagrams that could really win awards on Rate My Network Diagram-type web sites, but they may not be appropriate for mission-critical apps in cloud networks where simplicity and reliability matter most.

Tech Editor’s Note: For those unfamiliar, Rate My Network Diagram was a fun community-based web site for sharing – and likely shaming – complex network diagrams between engineers. Google’s image cache offers us a glimpse of what might have made it there:

Part of the problem is that we’re still very used to designing networks based upon discrete physical hardware that has its own costs, capabilities and limitations. But network virtualisation changes the rules, and makes some of our previous assumptions look foolish and wrong.

Here I present some guidelines to help enterprise users create cloud-based apps that integrate nicely with enterprise networks, with the fewest gotchas.

Rule 1: Directly connect to a real-world network nexus

This is crucially important. What we mean here is: first determine the centre of your network, and make sure that this element has real network power and isn’t virtual.

VM-based network appliances (firewalls, load balancers, etc.) are a huge boon for rapidly deploying capabilities to manage, monitor and manipulate network traffic. But they also represent a significant pinch point, and as such they limit the number of end-hosts that can be supported.

Interoute’s VDC helps here because it enjoys an intimate fusion with Interoute’s carrier-grade MPLS-based IP network that is able to carry both Internet and private network services in parallel with little difference.

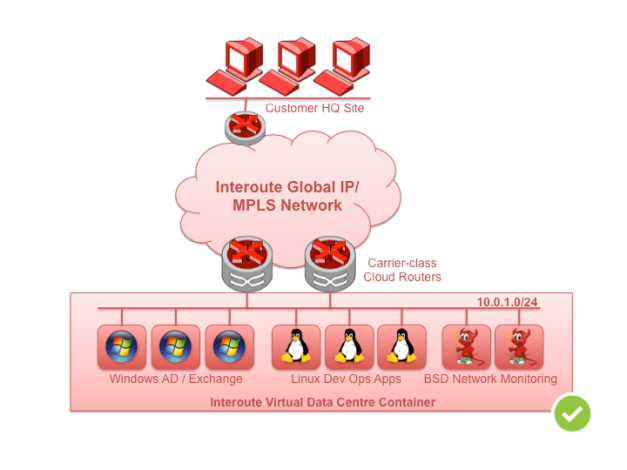

Fig 1. Large networks are fine with direct private network access

In the simplest case, an Interoute VDC customer can simply create an IP-layer subnet as large as needed, add it to their private WAN using the VDC Control Panel, and any established virtual machines are pingable as soon as they boot. The VDC Control Panel interfaces through Interoute’s innovative IP-layer API to signal the presence of the subnet, and any participating VPN sites immediately become aware of the cloud service.

No need to add static routes to VM-based routers. No need to configure and troubleshoot IPSec tunnels with complicated keys and encryption policies.

Because the cloud workload communicates directly with the real network, performance is limited only by the CPU and I/O capacity that is available to the actual VM.

Rule 2: Carefully dimension virtual network functions (VNFs)

Even though the ideal situation is to directly attach VMs to the network, sometimes this isn’t possible, or reasonable. The classic case is where one is using VDC to service Internet users and a firewall function is required.

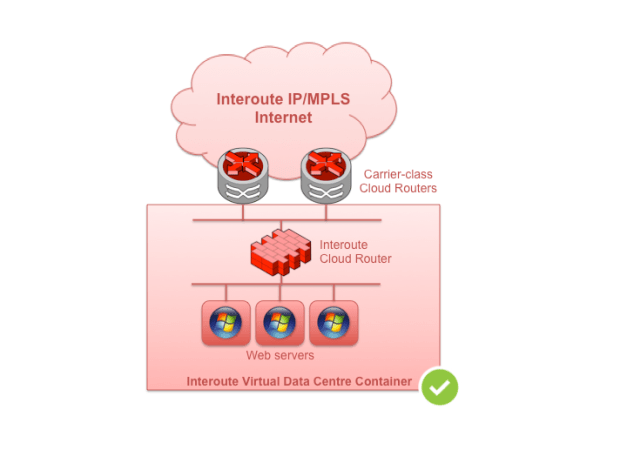

Fig 2. Keep Virtual Router served networks smaller

Without involving any existing private WAN (for which there are optimal solutions using Interoute’s Internet Central service), the easiest option is to place Interoute’s Cloud Router at the head of the subnet. It can seamlessly connect the private cloud network with the Internet and provide the following features:

- simple session-based firewall logic

- simple session-based load-balancing logic

- outbound source-based NAT to masquerade the internal addresses of outgoing sessions

- inbound port-forwarding, to present multiple explicitly nominated internal services on the same external IP

Alternatively, customers can choose from specialist vendor firewalls or network functions. Regardless of which option is chosen, however, it’s important to realise that the network function is virtual and it’s implemented on a VM, just like any other ordinary workload. Let’s call it a Virtual Network Function (VNF), and the apps that depend on it VNF-powered apps.

This means that a packet transmitted from your Linux-based Apache web server, for example, consumes I/O and CPU resources not just on that VM, but also on the VM hosting the firewall function through which the packet needs to pass.

Technology aside, the virtual compute resources available in VDC are uniform and equal so it’s easy to see how this can become a bottleneck when a VNF has to function for a large number of Virtual Machines.
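To put rough numbers on that bottleneck, here is a minimal back-of-the-envelope sketch. The 1000 Mbit/s forwarding capacity is a made-up figure for illustration, not a measured VDC value, and the function name `per_vm_share_mbps` is hypothetical. It simply assumes every VM behind the VNF sends at the same time and the VNF's capacity is split evenly.

```python
def per_vm_share_mbps(vnf_capacity_mbps: float, vm_count: int) -> float:
    """Worst-case throughput per VM if all VMs send through one VNF at once."""
    if vm_count < 1:
        raise ValueError("vm_count must be at least 1")
    return vnf_capacity_mbps / vm_count


# Assumption for illustration only: the VM hosting the VNF can forward
# 1000 Mbit/s in total. Real capacity depends on the VM's CPU and I/O.
VNF_CAPACITY_MBPS = 1000.0

for vm_count in (5, 50, 200):
    share = per_vm_share_mbps(VNF_CAPACITY_MBPS, vm_count)
    print(f"{vm_count:>3} VMs behind one VNF: {share:.1f} Mbit/s each (worst case)")
```

The exact numbers matter less than the shape: the per-VM share shrinks in direct proportion to the number of VMs that depend on the same VNF.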

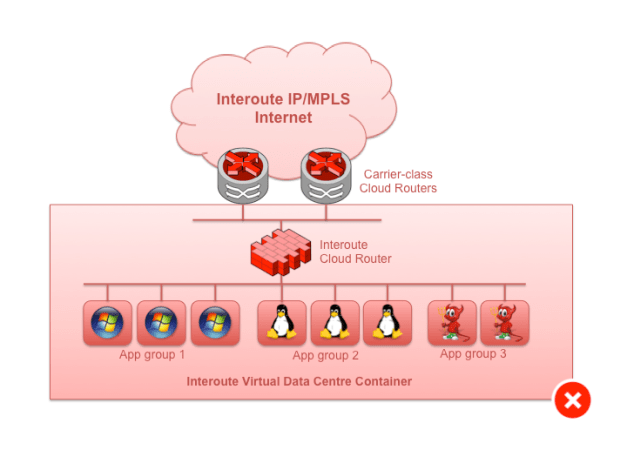

Fig 3. Don’t do this: large subnet dependent on single VNF

VNFs are extremely useful, however, so to avoid getting trapped in a situation where excessive resources are used and VM capacity is wasted, we need another rule.

Rule 3: Divide and conquer VNF-powered App Groups

Separate VNF-powered apps into logical groups in order to avoid overpowering VNFs and wasting VM resources. Avoid creating large monolithic subnets with lots of VM server attachments when you know that the gateway serving the subnet is virtual.

The knee-jerk response to this is usually to break things into multiple subnets, but, of course, because you still need the VNF, you end up multi-attaching to that. So that doesn’t really fix the problem.

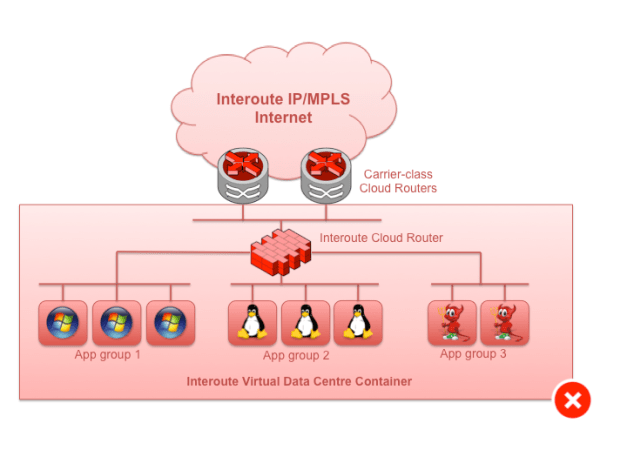

Fig 4. Don’t do this: Multiple small subnets, but the VNF is still acting as the network nexus

Instead of that, replicate the VNF for each of the subnets / application groups that you want to service. The traditional network function vendors haven’t quite got their heads around this subtlety in the move to a software model.

Fig 5. Do this: separate VNFs for each logical app
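As a planning aid, the one-VNF-per-app-group layout can be sketched with Python's standard `ipaddress` module. The address range, the group names and the convention of giving the VNF the first usable address in each subnet are all assumptions for illustration; the function `plan_app_groups` is hypothetical and does not call any VDC API.

```python
import ipaddress


def plan_app_groups(supernet: str, groups: list[str], prefix_len: int) -> dict[str, dict[str, str]]:
    """Give each app group its own subnet and its own VNF gateway address."""
    subnets = ipaddress.ip_network(supernet).subnets(new_prefix=prefix_len)
    plan = {}
    for name, subnet in zip(groups, subnets):
        # Assumed convention: the first usable host address is reserved
        # for the VNF that serves this group only.
        gateway = next(subnet.hosts())
        plan[name] = {"subnet": str(subnet), "vnf_gateway": str(gateway)}
    if len(plan) < len(groups):
        raise ValueError("supernet too small for the requested groups")
    return plan


# Hypothetical example: three logical apps carved out of one private range.
plan = plan_app_groups("10.20.0.0/22", ["web", "api", "batch"], 24)
for name, entry in plan.items():
    print(f"{name}: subnet {entry['subnet']}, VNF gateway {entry['vnf_gateway']}")
```

Each group ends up with a small subnet and a dedicated gateway, so no single VNF becomes the nexus for everything.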

Rule 4: Avoid Dual-homing VMs

This one is a tough one. The usual motivation is a separate out-of-band management access channel to the VM. The problem with multi-homing VMs, though, is that you’ve then got to manage the routing table on the VM and that can easily descend into static route hell.

Static routes are not great because they are just that – static. They don’t adjust with topology, and we need hacks such as VRRP to make them useful. Secondly, you now have two different addresses and associated routing for a single element. That would be fine, but the VM itself has only a single routing table, so asymmetric routing is inevitable for at least one of the two functions the VM is providing: either the main app, or the management channel. Asymmetric routing is the sworn enemy of enterprise-grade firewalls that maintain session state, and getting round that means NAT. Complicated.
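The asymmetric routing problem is easy to reproduce on paper. The sketch below models a dual-homed VM with a single routing table and longest-prefix matching; the interface names and addresses are invented for illustration, and this is a simplified model rather than how any particular OS implements its lookup.

```python
import ipaddress

# Hypothetical dual-homed VM: eth0 serves the app, eth1 is for management.
ROUTES = [
    # (destination prefix, outgoing interface)
    ("0.0.0.0/0", "eth0"),        # default route via the app-side gateway
    ("10.1.0.0/24", "eth0"),      # on-link app subnet
    ("192.168.50.0/24", "eth1"),  # on-link management subnet
]


def egress_interface(destination: str) -> str:
    """Pick the outgoing interface by longest-prefix match in the single table."""
    dest = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), iface)
        for prefix, iface in ROUTES
        if dest in ipaddress.ip_network(prefix)
    ]
    _, iface = max(matches, key=lambda m: m[0].prefixlen)
    return iface


def is_asymmetric(arrived_on: str, client: str) -> bool:
    """True when the reply leaves on a different interface than the request used."""
    return egress_interface(client) != arrived_on


# A management client on a remote network reaches the VM through eth1,
# but the reply only matches the default route and leaves via eth0.
print(is_asymmetric("eth1", "172.16.9.20"))
# A client on the management subnet itself is answered on eth1.
print(is_asymmetric("eth1", "192.168.50.7"))
```

The first case is the one that upsets stateful firewalls: the request and the reply take different paths. Fixing it per remote network is exactly where the static routes start to pile up.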

So when considering out-of-band management access, remember that the NICs we’re talking about on VMs now are, of course, virtual. They don’t fail in the same way real hardware fails, so having a diverse path for management isn’t as useful as one might think. The usual failure modes will see a hypervisor crash or die, which will cause the whole VM to have to restart or be re-homed. Virtual switch failure is rare, and real switch failure typically means a few seconds out while traffic reroutes.

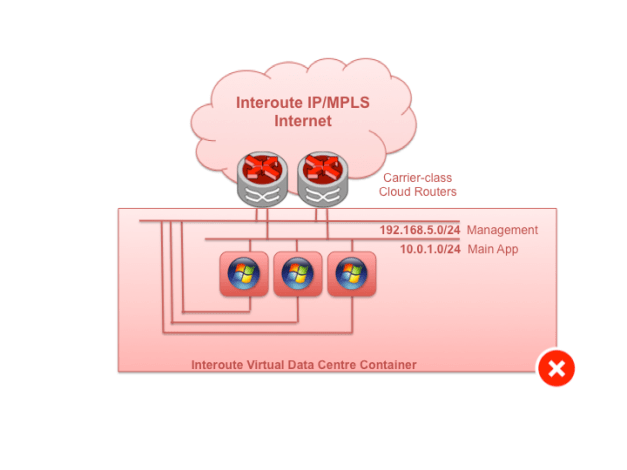

Fig 6. Try not to do it: multi-homing VMs

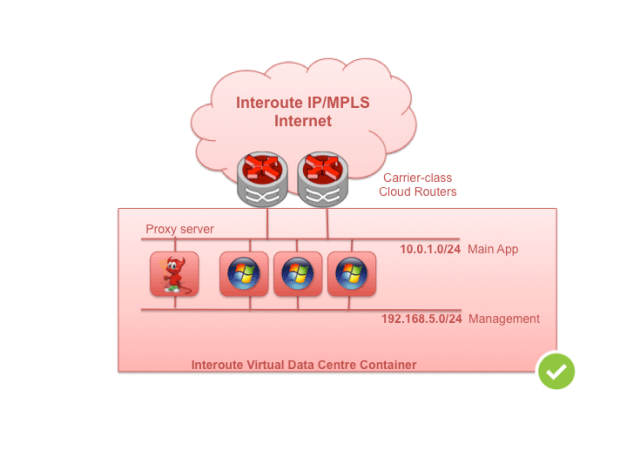

If you absolutely can’t live without a backside NIC for management or other access, and your VM is based on a standard OS that makes no provision for this, avoid any additional static routing on the alternate interface. Instead, access the VM through a proxy function on another VM. This way you don’t need to maintain the routing table on the host, and the asymmetric routing problem goes away. The alternate interface can then be treated as what network engineers call link-local scope only: it has no gateway.

Fig 7. Better: use a proxy server
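One way to keep yourself honest about the "no gateway on the management NIC" rule is a small check over the VM's route list. This is only a sketch: the `Route` tuple, the interface names and the addresses are hypothetical, and in practice you would fill the list from whatever your OS reports.

```python
from typing import NamedTuple, Optional


class Route(NamedTuple):
    prefix: str
    interface: str
    gateway: Optional[str]  # None means the prefix is directly connected (on-link)


def gatewayed_routes(routes: list[Route], interface: str) -> list[Route]:
    """Return the routes on `interface` that point at a gateway."""
    return [r for r in routes if r.interface == interface and r.gateway is not None]


# Hypothetical VM: eth0 carries the app, eth1 is the management NIC.
routes = [
    Route("0.0.0.0/0", "eth0", "10.1.0.1"),
    Route("10.1.0.0/24", "eth0", None),
    Route("192.168.50.0/24", "eth1", None),  # on-link only, reachable by the proxy VM
]

offenders = gatewayed_routes(routes, "eth1")
if offenders:
    print(f"static routes to remove from eth1: {offenders}")
else:
    print("management NIC is link-local only")
```

With the proxy VM sitting on the same management subnet, the only route the alternate interface needs is the on-link one, so the list of offenders should stay empty.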

Summary

Interoute’s VDC gets you off to a flying start with either private network or public Internet topologies. Unlike most cloud providers, there’s no need to spend time defining over-the-top tunnel configurations to connect different sites together. And where you need more than a simple direct connection to a private network, such as in Internet scenarios, a fully-featured VM-based cloud router, or VNF, can be a powerful tool. Unlike in physical environments though, where network functions are expensively implemented, often on custom hardware and thus shared amongst as many client hosts as possible, virtual network functions share the same pool of resources – CPU, RAM, I/O – as our mainstream apps. Applying some of the simple steps listed here helps us make the most of mixed network and compute environments.